Is forecast accuracy driving your decisions? 5 diagnostic questions for planning leaders

Mar 26, 2026 • 8 min

While every retailer measures forecast accuracy, few can explain how those measurements connect to the orders their systems place every day. That gap between tracking a number and using it to make better inventory decisions is where most of the value gets lost.

These five questions stem from a RELEX webinar on forecast accuracy featuring Brian Kilcourse, Managing Partner at RSR Research, a firm that has studied retail technology adoption for nearly two decades. Together with RELEX experts, Brian explored why accuracy scores improve while inventory outcomes don’t, and what it actually takes to close that gap. The questions can help you determine whether your accuracy measurement is shaping your strategic supply chain decisions or just filling a dashboard.

“Forecasting has moved from what I used to think of as a project, something we did periodically, to something that is running constantly in the background. You’re comparing it to your current performance and making adjustments as necessary.”

Brian Kilcourse, Managing Partner, RSR Research

1: Are you measuring at the level where decisions happen?

Red flag: You’re tracking accuracy monthly at the category level, but replenishment orders are placed daily at the item-location level. The measurement and the decision don’t match.

The real cost: Category-level accuracy scores can look healthy while individual stores run out of stock or pile up excess inventory. A 92% accuracy rating at the category-month level might mask the fact that half of your item-location forecasts are off by enough to generate the wrong order quantity.
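Here’s a minimal illustration of that masking effect, using made-up numbers for two stores and two SKUs. It assumes accuracy is defined as 1 minus WAPE (weighted absolute percentage error), one common convention; the data and variable names are hypothetical, not taken from any RELEX report.

```python
# Hypothetical one-day data for one category: (store, sku) -> (forecast, actual units).
item_location = {
    ("store_1", "sku_a"): (10.0, 14.0),
    ("store_1", "sku_b"): (20.0, 16.0),
    ("store_2", "sku_a"): (12.0, 8.0),
    ("store_2", "sku_b"): (18.0, 22.0),
}

def wape_accuracy(pairs) -> float:
    """Accuracy = 1 - WAPE, where WAPE = sum(|forecast - actual|) / sum(actual)."""
    abs_error = sum(abs(f - a) for f, a in pairs)
    total_actual = sum(a for _, a in pairs)
    return 1.0 - abs_error / total_actual

# Category level: sum forecasts and actuals first, so over- and under-forecasts cancel.
category_forecast = sum(f for f, _ in item_location.values())
category_actual = sum(a for _, a in item_location.values())
category_accuracy = wape_accuracy([(category_forecast, category_actual)])

# Item-location level: score each store-SKU error before aggregating.
item_level_accuracy = wape_accuracy(item_location.values())

print(f"category: {category_accuracy:.0%}, item-location: {item_level_accuracy:.0%}")
```

Because one store’s over-forecast offsets another’s under-forecast, the category total scores 100% while the store-SKU forecasts that actually drive orders sit around 73%.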

Planners spend time chasing accuracy improvements that never actually change what gets ordered. Timing matters too: if a product’s lead time is three weeks but you measure accuracy at a one-month lag, you’re scoring a different forecast version from the one the order was based on. The score says little about whether that order was right, so it’s too late to learn anything useful or drive a better decision.

The pressure to get this right is growing. RSR Research finds that 99% of retailers are at least considering localization of their assortments, driven by consumers who expect relevant, store-specific inventory rather than uniform ranges. Aggregate-level accuracy measurement can’t support localized replenishment decisions.

The solution: Match your measurement granularity and timing to the decisions that matter most. If replenishment orders are placed daily at the store-SKU level, measure accuracy at that level. If the lead time is 3 weeks, compare the forecast version from 3 weeks ago with what actually sold. Category-month metrics still have a place in S&OP conversations, but they shouldn’t be the only lens.
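To make lag alignment concrete, here’s a sketch that assumes forecasts are stored as dated snapshots, keyed by when each version was created and which day it predicts (a hypothetical structure, not any particular system’s schema). For each target day it scores only the latest version that existed when the order had to be placed.

```python
from datetime import date, timedelta

# Hypothetical forecast history: (created_on, target_day) -> forecast units.
forecast_snapshots = {
    (date(2026, 3, 1), date(2026, 3, 22)): 40.0,   # made 3 weeks ahead
    (date(2026, 2, 20), date(2026, 3, 22)): 55.0,  # made 30 days ahead
    (date(2026, 3, 15), date(2026, 3, 22)): 46.0,  # made 1 week ahead
}
actual_sales = {date(2026, 3, 22): 45.0}

def lag_aligned_error(target_day: date, lead_time_days: int) -> float:
    """Absolute error of the forecast version that existed when the order was placed."""
    decision_day = target_day - timedelta(days=lead_time_days)
    # Only versions created on or before the decision day could have driven the order.
    eligible = [
        (created, units)
        for (created, target), units in forecast_snapshots.items()
        if target == target_day and created <= decision_day
    ]
    if not eligible:
        raise ValueError("no forecast version existed at decision time")
    _, units = max(eligible)  # latest eligible version by creation date
    return abs(units - actual_sales[target_day])

print(lag_aligned_error(date(2026, 3, 22), lead_time_days=21))
```

With a three-week lead time, the scored forecast is the 40-unit version made on March 1, an error of 5 units. Scoring the one-week-old version instead would report an error of 1 unit and flatter a forecast that never drove the order.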

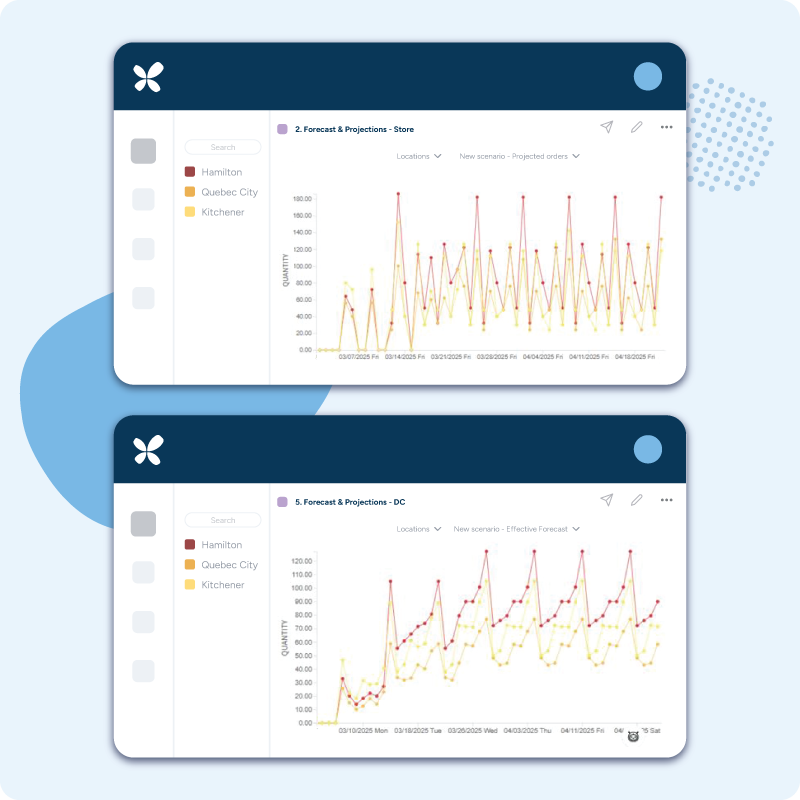

RELEX calculates forecast accuracy at the level where decisions are made, such as store-SKU-day for replenishment, or DC-SKU-week for distribution center planning, and aligns the measurement horizon to actual lead times. This means the accuracy scores planners see directly reflect the forecasts that generated their orders.

2: Are you tracking bias as seriously as average error?

Red flag: You track MAPE or average error, but not directional bias. You know the forecast is off, but you don’t know whether it’s consistently too high or too low.

The real cost: Over-forecasting and under-forecasting have very different consequences, but most average error metrics treat them as identical. Over-forecasting creates excess inventory and markdowns and directly increases spoilage for fresh categories. Under-forecasting leads to empty shelves, lost sales, and customers who choose to shop elsewhere.
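A quick way to see why average error metrics can’t tell these cases apart: in this sketch with hypothetical numbers, a forecast that runs 10% high and one that runs 10% low get the same MAPE, while a signed bias measure (defined here as total forecast minus total actual, divided by total actual) separates them.

```python
def mape(forecasts, actuals):
    """Mean absolute percentage error: the direction of each error is discarded."""
    return sum(abs(f - a) / a for f, a in zip(forecasts, actuals)) / len(actuals)

def bias(forecasts, actuals):
    """Signed bias as a share of actual demand: positive means over-forecast."""
    return (sum(forecasts) - sum(actuals)) / sum(actuals)

actuals = [100.0, 100.0, 100.0, 100.0]
over = [110.0, 110.0, 110.0, 110.0]   # consistently too high
under = [90.0, 90.0, 90.0, 90.0]      # consistently too low

print(f"MAPE over={mape(over, actuals):.2f}, under={mape(under, actuals):.2f}")
print(f"bias over={bias(over, actuals):+.2f}, under={bias(under, actuals):+.2f}")
```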

A small bias at the item level can seem insignificant on its own. But repeat that same 2–3% over-forecast across hundreds of stores and thousands of SKUs, and it quietly ties up capital in stock that didn’t need to be there. Roll it up to the distribution center, and the imbalance becomes obvious, but by then the damage is done.

The solution: Track directional forecast bias alongside your error metrics, at the product-location level. Look for systematic patterns, such as whether you are consistently over on slow-movers or under on promotions. These patterns point to root causes that average error scores hide completely.
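A minimal sketch of segment-level bias tracking, assuming each product-location record has already been tagged with a segment such as slow mover or promotion. The data, segment names, and the 5% tolerance are hypothetical, and this isn’t a description of how RELEX detects bias.

```python
from collections import defaultdict

# Hypothetical product-location history: (segment, forecast, actual units).
history = [
    ("slow_mover", 3.0, 2.0),
    ("slow_mover", 4.0, 3.0),
    ("slow_mover", 2.0, 2.0),
    ("promotion", 80.0, 100.0),
    ("promotion", 60.0, 75.0),
    ("regular", 50.0, 52.0),
    ("regular", 48.0, 46.0),
]

def bias_by_segment(rows):
    """Signed bias per segment: (sum forecast - sum actual) / sum actual; positive = too high."""
    totals = defaultdict(lambda: [0.0, 0.0])  # segment -> [sum of forecasts, sum of actuals]
    for segment, forecast, actual in rows:
        totals[segment][0] += forecast
        totals[segment][1] += actual
    return {seg: (f - a) / a for seg, (f, a) in totals.items()}

def flag_systematic_bias(biases, threshold=0.05):
    """Return segments whose bias exceeds a hypothetical tolerance in either direction."""
    return sorted(seg for seg, b in biases.items() if abs(b) > threshold)

biases = bias_by_segment(history)
print(biases)
print(flag_systematic_bias(biases))  # slow movers run high, promotions run low
```

Note that the "regular" segment nets out to zero bias even though its individual forecasts miss, which is exactly the kind of noise a bias view lets you set aside.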

RELEX’s AI-driven platform detects systematic bias patterns automatically and flags them before they compound. Because the platform tracks bias at the product-location level, planners can see exactly where forecasts consistently run high or low, and whether those biases are large enough to distort replenishment orders.

3: Do planners know which forecast errors matter most?

Red flag: All forecast errors get flagged equally, regardless of whether they would change an order or have any business impact.

The real cost: A planner adjusts a forecast from 4.2 to 4.8 units. The case pack is 12. Both forecasts generate the same order, so the “improvement” created zero value. Meanwhile, a different SKU has an error large enough to add an extra case to every order, but it’s buried in the same exception list.
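The case-pack example can be reproduced with a deliberately simplified ordering rule: order enough to cover the forecast net of stock on hand, rounded up to whole cases. The rule and function name are hypothetical; real replenishment logic also accounts for safety stock, review periods, and minimum order quantities.

```python
import math

def order_quantity(forecast: float, on_hand: float, case_pack: int) -> int:
    """Hypothetical rule: cover forecast minus stock on hand, rounded up to whole cases."""
    net_need = max(forecast - on_hand, 0.0)
    return math.ceil(net_need / case_pack) * case_pack

# The example above: adjusting 4.2 -> 4.8 units with a case pack of 12.
before = order_quantity(4.2, on_hand=0.0, case_pack=12)
after = order_quantity(4.8, on_hand=0.0, case_pack=12)
print(before, after)  # both forecasts round up to one case

# A small change that crosses a case boundary does alter the order.
print(order_quantity(11.5, 0.0, 12), order_quantity(12.5, 0.0, 12))
```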

Without a way to distinguish errors that cross ordering thresholds from those that don’t, planners spread their attention evenly across problems of wildly different importance. Low-volume items consume the same review time as high-value ones. The result is a lot of effort with little to show for it.

The solution: Focus planner attention on forecast errors that actually change what gets ordered. RELEX developed batch-aware accuracy metrics specifically for this: forecast error and bias in batches measure whether an error was large enough to result in a different order quantity than a perfect forecast would have produced.

The thresholds are straightforward. A cycle error below 0.25 means forecast inaccuracy isn’t affecting orders, so no action is needed. A cycle error above 1.0 indicates inaccuracies are actively distorting order quantities, and this should be the focus. Because these metrics are scale-agnostic, the same thresholds apply whether a product sells 1 unit or 1,000 units per day, so planners can prioritize based on real business impact rather than noise.
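The formula behind RELEX’s metric isn’t spelled out here, so the sketch below assumes one plausible reading: cycle error is the absolute forecast error over a single order cycle, divided by the order batch size. The 0.25 and 1.0 thresholds come from the text; the function names, the "monitor" label for the band in between, and the sample data are hypothetical.

```python
def cycle_error_in_batches(forecast: float, actual: float, batch_size: float) -> float:
    """Assumed definition: |forecast - actual| over one order cycle, in order batches."""
    return abs(forecast - actual) / batch_size

def cycle_bias_in_batches(forecast: float, actual: float, batch_size: float) -> float:
    """Signed version of the same assumed measure: positive means the forecast ran high."""
    return (forecast - actual) / batch_size

def triage(cycle_error: float) -> str:
    """Thresholds 0.25 and 1.0 follow the text; the middle-band label is hypothetical."""
    if cycle_error < 0.25:
        return "no action"  # error too small to change an order
    if cycle_error > 1.0:
        return "focus"      # error actively distorting order quantities
    return "monitor"

# (forecast, actual, batch size) per order cycle, at very different volumes.
cases = {
    "low volume, small miss": (4.2, 4.8, 12),
    "low volume, big miss": (5.0, 20.0, 10),
    "high volume, big miss": (1000.0, 1150.0, 100),
    "high volume, moderate miss": (1000.0, 1060.0, 100),
}
for name, (f, a, batch) in cases.items():
    err = cycle_error_in_batches(f, a, batch)
    print(f"{name}: {err:.2f} batches -> {triage(err)}")
```

Expressing the error in batches rather than units is what lets one pair of thresholds cover a slow mover and a fast mover in this sketch. In practice you would probably average the measure over many order cycles rather than judge a single one.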

4: Can you connect accuracy improvements to business outcomes?

Red flag: Accuracy scores improved, but no one can point to reduced inventory, improved availability, or lower waste as a result.

The real cost: When accuracy improvements don’t visibly translate into business results, leadership begins to question the investment. Forecasting becomes a reporting exercise, a number that goes up or down on a dashboard without anyone understanding what changed operationally.

This disconnect usually occurs because accuracy is measured in a way that doesn’t align with replenishment decisions. A 2-percentage-point improvement in MAPE sounds good, but if those gains came from products where the error was already below the ordering threshold, nothing actually changed downstream.

The solution: Track how accuracy improvements flow through to the metrics that matter: inventory levels, service levels, spoilage rates, and working capital. Batch-based accuracy metrics make this connection concrete. They show when a forecast improvement was large enough to alter an order, and you can then trace that order change to its impact on inventory and availability.
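Here’s a sketch of that tracing step under a hypothetical rule (order the forecast rounded up to whole case packs): compare the order each SKU would get under the old and improved forecasts, and keep only the SKUs whose order actually changed. The SKU data and function names are made up for illustration.

```python
import math

def order_quantity(forecast: float, case_pack: int) -> int:
    """Hypothetical rule: order the forecast quantity rounded up to whole case packs."""
    return math.ceil(forecast / case_pack) * case_pack

# Hypothetical SKUs: name -> (old forecast, improved forecast, case pack)
skus = {
    "sku_a": (4.2, 4.8, 12),
    "sku_b": (11.0, 13.0, 12),
    "sku_c": (30.0, 23.0, 6),
}

def order_changes(skus):
    """Map each SKU whose order changed between forecast versions to the change in units."""
    changes = {}
    for sku, (old, new, pack) in skus.items():
        delta = order_quantity(new, pack) - order_quantity(old, pack)
        if delta != 0:
            changes[sku] = delta
    return changes

changes = order_changes(skus)
print(changes)
print("net units ordered:", sum(changes.values()))
```

Only sku_b and sku_c moved; sku_a’s improvement stayed inside one case. Linking those changes to inventory and availability would mean joining them with stock and sales data, which this sketch leaves out.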

RELEX customers see this connection clearly. Europris, a Norwegian discount retailer, centralized replenishment with RELEX and achieved a 17%+ reduction in DC inventory within 18 weeks while improving store availability from 91% to above 97%.

Ametller Origen, a Catalan grocery chain, saw differentiated improvements across segments. In non-perishables, availability rose 12 percentage points while inventory fell 9 percentage points. In refrigerated goods, availability rose 4 percentage points while inventory fell 24 percentage points.

The scale of the opportunity is larger than most planning teams realize. For a retailer carrying $1 billion in safety stock, a 10% reduction in forecast error can free up roughly $100 million in working capital. That math holds because safety stock scales roughly in proportion to forecast error: as error falls, so does the buffer inventory needed to compensate for it.
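The proportional relationship is easiest to see in the common textbook formula for safety stock, z * sigma * sqrt(L), where z is a service-level factor, sigma is the standard deviation of forecast error, and L is lead time in periods. This is a standard approximation, not necessarily how RELEX or any specific system computes buffers, and the z, sigma, and L values below are hypothetical.

```python
import math

def safety_stock(z: float, error_std: float, lead_time_periods: float) -> float:
    """Textbook safety stock in units: z * sigma_error * sqrt(lead time)."""
    return z * error_std * math.sqrt(lead_time_periods)

baseline_value = 1_000_000_000  # $1B of safety stock, the example above
z, sigma, lead_time = 1.65, 20.0, 3.0  # hypothetical service factor, error std dev, lead time

# Cut forecast error by 10%, holding service level and lead time fixed.
ratio = safety_stock(z, 0.9 * sigma, lead_time) / safety_stock(z, sigma, lead_time)
freed_capital = baseline_value * (1 - ratio)
print(f"safety stock falls to {ratio:.0%} of baseline, freeing about ${freed_capital:,.0f}")
```

Because z and sqrt(L) cancel in the ratio, the result doesn’t depend on the hypothetical values chosen. Formulas that also account for lead-time variability don’t scale quite this cleanly, so under those the saving is approximate.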

“If we can more efficiently use our capital to put the right inventory in the right place at the right time, we can do an awful lot with that money that we couldn’t do before.”

Brian Kilcourse, Managing Partner, RSR Research

5: Who owns accuracy measurement vs. who owns replenishment decisions?

Red flag: Demand planning measures accuracy. Supply chain makes replenishment decisions. The two teams coordinate through reports and meetings, not shared systems.

The real cost: When forecast accuracy lives in one team’s reporting and replenishment execution lives in another’s, accuracy measurement becomes a document nobody acts on. Better forecasts don’t automatically translate into better orders, because the handoff between teams introduces delays, misinterpretations, and competing priorities.

The problem gets worse during promotions and seasonal shifts, when accuracy matters most. One team updates the forecast, the other doesn’t see it in time, and the replenishment system runs on out-of-date numbers. The result: stockouts during campaigns, excess inventory afterwards, and both teams pointing at each other.

The solution: Accuracy measurement and replenishment execution need to occur in the same system, using the same data. When they’re connected, forecast improvements flow directly into the next order calculation, without manual handoffs or version-control issues.

RELEX unifies forecasting and replenishment on a single platform. The same ML-generated demand signal that accuracy metrics are calculated against also drives order proposals. RELEX AI agents diagnose issues, recommend actions, and execute decisions, while planners see accuracy scores alongside the orders those forecasts produced, so they can identify where improvements will have the most impact on availability and inventory. When both functions share a view of demand, capacity, and inventory positions, the silo problem disappears.

“AI helps us move from managing everything as if it were equally important to managing what’s truly important.”

Brian Kilcourse, Managing Partner, RSR Research

The bottom line

Forecast accuracy only creates value when it changes a replenishment decision.

If your measurement approach doesn’t reflect how orders are actually placed, improving accuracy scores won’t improve inventory outcomes. Three things to focus on:

- Measure where decisions happen. Granularity and timing must match the planning process they’re meant to inform.

- Track bias, not just error. Direction matters as much as magnitude: over-forecasting and under-forecasting create very different problems.

- Make errors actionable. Flag only what would change an order. Everything else is noise.